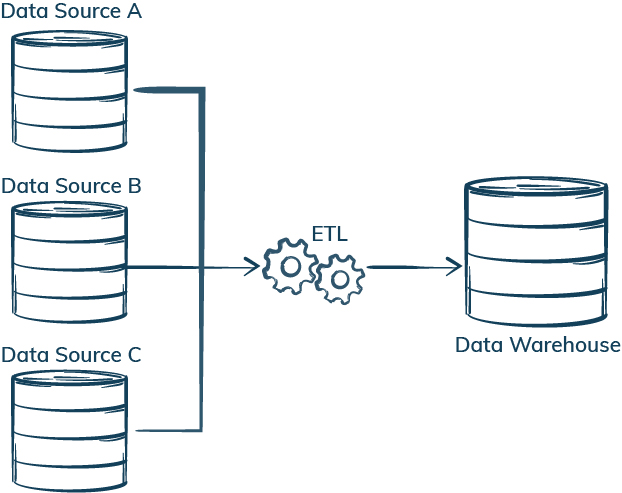

- Teradata Data Validation: Validate large data volumes stored and processed in Teradata systems.
- SAP Data Validation: Validate data used in business logic and reporting, and test data integration, export and import processes.
- WhereScape Test Automation: Automate test tasks for data warehouses created with WhereScape and perform health checks of the WhereScape model.
- Postal Address Validation: Geocode address data and compare it with a reliable source, such as a postal service database, to ensure its accuracy and existence.
- DataOps Automation: BiG EVAL assists with automated testing and validation of data, enhancing efficiency and reliability in DataOps.
- Testing in an Automated Data Warehouse Project: Maximize the degree of automation by automating test processes in generative data warehouse projects.
- Data Validation and Quality Monitoring: Continuously validate and monitor enterprise data to ensure quality and guarantee error-free usage.

ETL (extract, transform, load) refers to the group of processes that extract data from one place, transform it, and load it into another, which is often necessary to enable deeper analytics and business intelligence. ETL tools are software suites specialized in processing data in high volumes; think of SAS, Talend, or any other tool of this kind. The data transformation process is the part of an ETL process that prepares data for analysis.

ETL testing is a process that verifies that the data coming from source systems has been extracted completely, transferred correctly, and loaded in the appropriate format, effectively letting you know whether you have high data quality. It identifies duplicate data, data loss, and any missing or incorrect data.
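To make the checks that ETL testing performs more concrete, here is a minimal sketch in plain Python. It is not how any particular product works; the function name `compare_source_and_target`, the `id` key column, and the sample rows are all assumptions made for illustration, and it assumes rows are loaded without being transformed.

```python
from collections import Counter


def compare_source_and_target(source_rows, target_rows, key="id"):
    """Compare two lists of row dicts by a key column (hypothetical helper)."""
    source_by_key = {row[key]: row for row in source_rows}
    target_by_key = {row[key]: row for row in target_rows}
    target_counts = Counter(row[key] for row in target_rows)

    # Data loss: keys present in the source but absent from the target.
    missing = sorted(k for k in source_by_key if k not in target_by_key)
    # Duplicate data: keys that were loaded more than once.
    duplicates = sorted(k for k, n in target_counts.items() if n > 1)
    # Incorrect data: rows that exist on both sides but differ in content.
    mismatched = sorted(
        k for k, row in source_by_key.items()
        if k in target_by_key and target_by_key[k] != row
    )
    # Missing values: (key, column) pairs that are None in the target.
    null_fields = sorted({
        (row[key], col)
        for row in target_rows
        for col, value in row.items()
        if value is None
    })
    return {
        "missing": missing,
        "duplicates": duplicates,
        "mismatched": mismatched,
        "null_fields": null_fields,
    }


# Illustrative sample data (assumed, not taken from a real system).
source = [
    {"id": 1, "name": "Ada", "city": "London"},
    {"id": 2, "name": "Grace", "city": "New York"},
    {"id": 3, "name": "Linus", "city": "Helsinki"},
]
target = [
    {"id": 1, "name": "Ada", "city": "London"},
    {"id": 2, "name": "Grace", "city": None},
    {"id": 2, "name": "Grace", "city": None},
]

report = compare_source_and_target(source, target)
print(report)
```

In a real project the same comparisons would usually run as queries against the source and target databases rather than on in-memory lists.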
To automate the entire process, your ETL tool should start QuerySurge through its command-line API after the ETL software completes its load process.

- Data Warehouse and ETL/ELT Testing: Automate testing and data validation checks for your data warehouse, data vault and ETL/ELT processes.
- Data Integration Testing: Seamless quality assurance for data integration processes in development and live environments.

ETL refers to the three processes of extracting, transforming and loading data collected from multiple sources into a unified and consistent database. In a typical ETL workflow, data transformation is the stage that follows data extraction. It includes cleaning the data, such as removing duplicates and filling in NULL values, as well as reshaping the data and computing new dimensions and metrics.
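The cleaning steps named in the transformation stage (removing duplicates, filling in NULL values, computing a new metric) can be sketched in a few lines of plain Python. The `transform` function, the column names, and the `revenue` metric are hypothetical and chosen only for illustration; a real pipeline would typically express the same steps in SQL or in the ETL tool itself.

```python
def transform(rows, defaults):
    """Clean extracted rows: fill NULLs, drop exact duplicates, add a metric."""
    seen = set()
    cleaned = []
    for row in rows:
        # Fill NULL (None) values with a per-column default where one is given.
        filled = {
            col: defaults.get(col) if value is None else value
            for col, value in row.items()
        }
        # Remove exact duplicate rows by remembering each row's contents.
        fingerprint = tuple(sorted(filled.items()))
        if fingerprint in seen:
            continue
        seen.add(fingerprint)
        # Compute a new metric from existing columns (assumed definition).
        filled["revenue"] = filled["quantity"] * filled["unit_price"]
        cleaned.append(filled)
    return cleaned


# Illustrative extracted rows (assumed data).
extracted = [
    {"order_id": 1, "quantity": 2, "unit_price": 9.5},
    {"order_id": 1, "quantity": 2, "unit_price": 9.5},
    {"order_id": 2, "quantity": None, "unit_price": 4.0},
]

transformed = transform(extracted, defaults={"quantity": 0})
print(transformed)
```

Filling NULLs before deduplicating is a design choice in this sketch: two rows that differ only by a missing value and its default are then treated as the same row.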
